A couple weeks ago I highlighted the recommendation that researchers test their models (and the processes which generated them!) against random noise. This is an important “reality check” of their methods, to see how susceptible they are to detecting something in nothing. In the video above, Jimmy Kimmel gives a nice illustration of how this idea could be extended to a taste test, or any survey where participants are asked to differentiate between samples. Kimmel’s experiment also gives a nice illustration of how humans can be primed to find what we expect to find, even if it’s not there.

epistemology

31

Oct 12

Recommendation of the week

“[I]f you have performed any statistical analysis that is more complex than calculating the mean and the standard deviation, you should perform the same analysis on noise to make sure that whatever effect you observe is indeed a unique feature of your data and not an artefact of the analysis.”

Found this one over at Stefan’s sieste blog. I couldn’t agree more, especially now that computers and big data sets entice us to make ever more complex models. Oh, and that’s not a bad thing! As I’ve argued, we’ll need to give up on simple, easy to interpret models in order to get more predictive power.

I’d go even more meta than Stefan and argue that you should re-test your entire model-creating process on noise (perhaps he meant this with his quote). If you started with a data set, then ran a stepwise variable selection algorithm, then added in a new non-linear term to get a better fit, do the same on noise, trying to get the best fit. Are you able to get a statistically significant result? Better still, run the same procedure on different types of noise, not just Gaussian White (I know, sounds like something you’d load into a syringe. Normality, the gateway drug?).
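Here is a minimal sketch of that kind of check, with a stand-in for the full procedure: instead of a real stepwise algorithm, it simply keeps the best of 20 candidate predictors, all of them pure Gaussian noise. The sample size, the number of predictors, and the helper names (`noise`, `abs_corr`, `best_of`) are my own assumptions for illustration.

```python
import random

random.seed(0)
N_OBS, N_PREDICTORS, N_TRIALS = 100, 20, 500  # assumed sizes, not from any real study

def noise(n):
    """n draws of Gaussian white noise."""
    return [random.gauss(0.0, 1.0) for _ in range(n)]

def abs_corr(x, y):
    """Absolute Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return abs(sxy) / (sxx * syy) ** 0.5

def best_of(k):
    """Best |r| between a noise response and k independent noise predictors."""
    y = noise(N_OBS)
    return max(abs_corr(noise(N_OBS), y) for _ in range(k))

# Honest 5% cutoff: the 95th percentile of |r| for ONE pre-chosen predictor.
single = sorted(best_of(1) for _ in range(2000))
threshold = single[int(0.95 * len(single))]

# Same cutoff, but now we keep the best of 20 candidates, as a selection step would.
hits = sum(best_of(N_PREDICTORS) > threshold for _ in range(N_TRIALS))
false_positive_rate = hits / N_TRIALS

print(f"5% cutoff for |r|: {threshold:.3f}")
print(f"share of pure-noise runs that pass it after selection: {false_positive_rate:.2f}")
```

If the twenty tests were independent you would expect something near 1 − 0.95^20 ≈ 0.64 rather than 0.05. Swapping `random.gauss` for another noise source is the easy way to try different types of noise.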

23

Oct 12

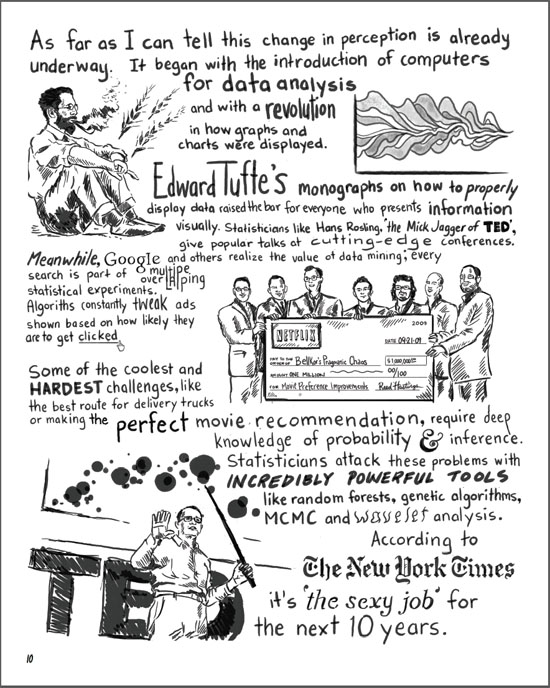

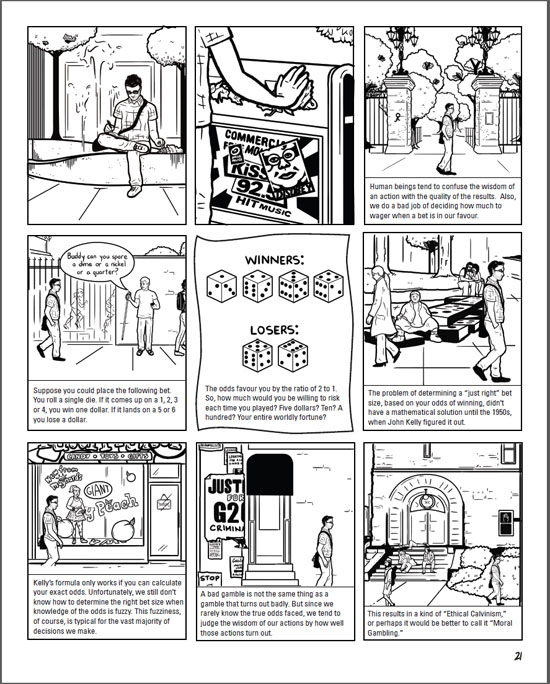

Comic with stats discussion

I recently finished work on the first issue of a graphic novel. It’s in the form of a fictional first person narrative. The story isn’t directly about statistics, but there are a few digressions on the subject. Here are some samples, make sure to click on the images for a larger view:

If you’re interested, head over to sunfalls.com and pick up a copy. Here’s the order page. The comic comes with a full money-back guarantee, including shipping. You don’t even have to send back your copy to claim the refund.

13

Oct 12

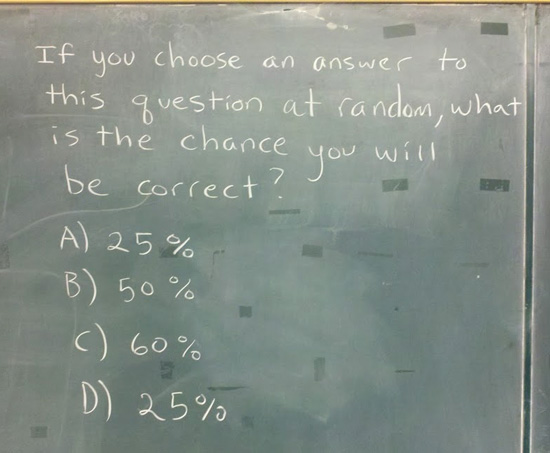

If you choose an answer to this question at random, what is the chance you will be correct?

19

Jun 12

Manifesto update

A small one in terms of words, but lots of thought has gone into this addition:

Correlation proves compatibility.

Negative correlation implies incompatibility.

As Ned Ryerson would ask, “Am I right or am I right?”

2

May 12

May Manifesto addendum

Just added another statement to my manifesto. Here is the full text:

Interpret or predict. Pick one. There is an inescapable tradeoff between models which are easy to interpret and those which make the best predictions. The larger the data set, the higher the dimensions, the more interpretability needs to be sacrificed to optimize prediction quality. This puts modern science at a crossroads, having now exploited all the low hanging fruit of simple models of the natural world. In order to move forward, we will have to put ever more confidence in complex, uninterpretable “black box” algorithms, based solely on their power to reliably predict new observations.

Since you can’t comment on WordPress pages, you can post any comments about my latest addition here. First, though, here is an example that might help explain the difference between interpreting and predicting. Suppose you wanted to say something about smoking and its effect on health. If your focus is on interpretability, you might create a simple model (perhaps using a hazards ratio) that leads you to make the following statement: “Smoking increases your risk of developing lung cancer by 100%”.

There may be some broad truth to your statement, but to more effectively predict whether a particular individual will develop cancer, you’ll need to include dozens of additional factors in your model. A simple proportional hazards model might be outperformed by an exotic form of regression, which might be outperformed by a neural network, which would probably be outperformed by an ensemble of various methods. At which point, you can no longer claim that smoking makes people twice as likely to get cancer. Instead, you could say that if Mrs. Jones (a real estate agent and mother of two, in her early 30s, with no family history of cancer) begins smoking two packs a day of filtered cigarettes, your model predicts that she will be 70% more likely to be diagnosed with lung cancer in the next 10 years.

The shift taking place right now in how we do science is huge, so big that we’ve barely noticed. Instead of seeing the world as a set of discrete, causal linkages, this new approach sees rich webs of interconnections, correlations and feedback loops. In order to gain real traction in simulating (and making predictions about) complex systems in biology, economics and ecology, we’ll need to give up on the ideal of understanding them.

23

Feb 12

A classification scheme for types of randomness

We often speak implicitly of different types of randomness but neglect to name or categorize them. Consider this post to be a kind of white paper or rough draft on the division of randomness into five categories. If you start using these distinctions explicitly, even if only in your own head, I think you will find them highly useful, as I have.

Type 0: Fixed numbers or known outcomes

Type 0 randomness is the special case of randomness where the data are already known. Any known outcome, regardless of the process that generated it, is Type 0 randomness. Once known, it has become a constant. In terms of information conveyed, all Type 0 randomness has zero informational entropy (a measure of uncertainty), and all messages with zero entropy are examples of Type 0 randomness.
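To make the entropy claim concrete, here is a small sketch using Shannon entropy in bits. The function name and the three example distributions are mine, chosen only for illustration.

```python
import math

def shannon_entropy(probabilities):
    """Entropy in bits of a discrete distribution given as a list of probabilities."""
    return sum(-p * math.log2(p) for p in probabilities if p > 0)

known_outcome = [1.0]      # Type 0: one outcome, already known
fair_coin = [0.5, 0.5]
fair_die = [1 / 6] * 6

print(shannon_entropy(known_outcome))  # 0.0
print(shannon_entropy(fair_coin))      # 1.0
print(round(shannon_entropy(fair_die), 3))
```

A known outcome puts all of its probability on a single value, so there is nothing left to be uncertain about and the entropy is zero.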

Type 1: Pseudo random.

Most computers generate random numbers by a deterministic process. An initial “seed” is picked using some environmental factor, like the microsecond timing of the CPU, and from there onward every number that follows is fully determined by the algorithm. These algorithms can be very good, in terms of producing sequences of numbers that have desirable qualities. Yet, if you know that the sequence comes from some variation of, say, the Mersenne Twister, then a single number or short sub-sequence might be enough to predict all the subsequent numbers. Even if you can’t guess at the underlying mechanism, algorithms like the Mersenne Twister eventually loop: once you’ve seen the whole sequence, all future numbers will be known exactly, and you will have Type 0 randomness.
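A quick way to see the determinism is to seed the same generator twice. This sketch uses Python’s standard `random` module, whose default generator is a Mersenne Twister; the seed values are arbitrary.

```python
import random

def draw(seed, count=5):
    """Return the first `count` numbers produced from a given seed."""
    generator = random.Random(seed)  # Mersenne Twister under the hood
    return [generator.random() for _ in range(count)]

first_run = draw(seed=2012)
second_run = draw(seed=2012)
other_seed = draw(seed=2013)

print(first_run == second_run)  # True: same seed, same "random" sequence
print(first_run == other_seed)
```

Anyone who knows the seed and the algorithm holds the whole sequence as Type 0 randomness.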

Computer software isn’t the only source of Type 1 randomness. Card shuffling machines, if sufficiently precise in their operation, map each unique ordering of playing cards to a single final ordering. Learn how the machine works, and you will know how each initial ordering is transformed.

The key to Type 1 randomness is that it is fully reducible to Type 0, in principle. The data source is known to be determinate, but the code is yet to be cracked. With enough time, attention, or technical sophistication, the sequence can be fully mapped.

Type 2: Non-fully reducible

Most real world randomness, and in general the most interesting sources of randomness, are of Type 2. Data streams of Type 2 randomness are conditionally random in the sense that we are able to reduce the uncertainty related to them, but only up to a certain point. Our model predicts the value of some response variable based on the other data, and this prediction can be quite good. But with Type 2 randomness there will always be some uncertainty left over, conditional on us making the best prediction we can.

A typical example of Type 2 randomness would be predicting whether certain individuals will develop heart disease within the next 10 years. Without knowing any specifics about the individuals, it’s very hard to make accurate predictions. Once we know some basic data, such as age, sex, and weight, we can make a better prediction. Even more fine-grained detail (history of smoking, diet, exercise patterns) allows us to make even better predictions. Each study or experiment we do, if of sufficient quality, improves the predictions we are able to make. Yet the randomness is non-fully reducible in the sense that, no matter how good our prior information or model, we will never be able to predict with 100% certainty whether a person will develop heart disease.

Regression curves are attempts to understand Type 2 randomness by separating signal (the model, or conditionally determinate, part) and noise. Often this noise part is modeled with some maximum entropy distribution, like the Gaussian. This is our way of recognizing that beyond some limit, we can no longer reduce the randomness. There will always be some Type 3 randomness left over.

Type 3: Martingale random

One way to think about Type 3 randomness is to imagine a fair bet. If the true probability of an event happening is 1/2, then 1 to 1 odds make it martingale random. There’s nothing you can do to improve your expected return to above zero; nor is there anything you can do to decrease your expectation to below zero. In a series of independent fair bets, strategy is irrelevant to expectation. Importantly, this doesn’t prevent you from adjusting the probability distribution for payoffs, if you are able to vary wager amounts and stopping times. For example, you could try the martingale betting strategy, which offers a high probability of making small gains in exchange for a small chance of catastrophic loss.
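The martingale betting strategy is easy to simulate. In this sketch the bankroll of 1023 units, the base bet of 1, and the function name are assumptions picked so that the gambler can afford exactly ten doublings on a fair even-money bet.

```python
import random

random.seed(1)

def play_martingale(bankroll=1023, base_bet=1):
    """Double the stake after every loss; stop at the first win or when the
    next stake is unaffordable. Returns the net profit for one session."""
    profit, bet = 0, base_bet
    while bet <= bankroll + profit:
        if random.random() < 0.5:   # fair coin: win the current stake
            return profit + bet
        profit -= bet               # lose the stake, then double it
        bet *= 2
    return profit                   # ten straight losses: bankroll gone

results = [play_martingale() for _ in range(100_000)]
win_rate = sum(r > 0 for r in results) / len(results)
mean_profit = sum(results) / len(results)

print(f"sessions ending ahead: {win_rate:.4f}")
print(f"average profit per session: {mean_profit:.3f}")
print(f"worst session: {min(results)}")
```

Almost every session ends one unit ahead, the rare disaster costs the entire bankroll, and the average stays near zero. The strategy reshapes the payoff distribution without touching the expectation.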

Martingale randomness implies that there is no disconnect between the “advertised” distribution and the true (or revealed) distribution. The theoretical “fair coin” you meet in textbooks is martingale random. Of course you have to be very careful in how you interpret the results of a real coin toss in terms of informational content. Maybe it isn’t martingale random after all!

Type 3 randomness is not limited to situations in which you have two equally probable outcomes. Anytime you are unable to reduce randomness beyond a particular limit of predictability, what’s left over is martingale randomness. In fact, through a process of “whitening,” signals that generate non-uniform randomness can be converted into uniform randomness. The opposite can be accomplished as well (though I’ve never heard it called “blackening”).
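One classic way to do this kind of conversion, not named above, is von Neumann’s trick for a biased coin: read the flips in pairs, keep the first flip of every unequal pair, and throw away the equal pairs. The sketch below assumes independent flips with a constant bias; the 80% heads figure and the function names are made up for illustration.

```python
import random

random.seed(7)

def biased_flips(n, p_heads=0.8):
    """n independent flips of a coin that lands heads (1) with probability p_heads."""
    return [1 if random.random() < p_heads else 0 for _ in range(n)]

def von_neumann_extract(bits):
    """Take non-overlapping pairs; emit the first bit of each unequal pair."""
    return [a for a, b in zip(bits[0::2], bits[1::2]) if a != b]

raw = biased_flips(200_000)
whitened = von_neumann_extract(raw)

raw_share = sum(raw) / len(raw)
whitened_share = sum(whitened) / len(whitened)
print(f"share of heads before: {raw_share:.3f}")
print(f"share of heads after:  {whitened_share:.3f}")
print(f"bits kept: {len(whitened)} of {len(raw)}")
```

The pairs heads-tails and tails-heads are equally likely whatever the bias, which is why the output is balanced. The price is that most of the input gets discarded.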

Type 4: Real randomness.

This is the real thing: baked-in, irreducible randomness. For a data source to be Type 4 random, it must be martingale random and it must come from a sequence that is not only unknown, but a priori unknowable. If Type 4 randomness exists, then God plays dice; randomness is “baked in” to the universe.

I suspect that if Type 4 randomness really does exist, then it will be impossible to prove.

General thoughts on types and some examples

The most important thing to note about these categorizations is that the type of randomness depends on your perspective. The cards you hold in your hand are Type 0 randomness to you, but to the person sitting across the poker table from you, they are Type 2 randomness. Your opponent can use any number of tools to try and do better than pure chance at guessing your hand (how much you bet, the look in your eyes, and of course the cards they hold). The type of randomness you perceive is a function of what you know.

All degenerate random variables (i.e. the indicator function for the entire sample space, which is always 1) are Type 0 randomness.

Most of the games we play have some element of Type 2 randomness. Kids will play games with Type 1 randomness, like War, which is deterministic for any given card shuffling, and could in theory be mapped out. Type 1 randomness can still be surprising to you, but if there is any skill involved it would have to be Type 2 randomness: entropy reducible in theory.

The concept of Type 3 randomness is connected with two important statistical concepts: sufficiency and coherence. Once you know the sufficient statistics from a data source (and, vitally, assuming your model about the data is correct), there’s nothing more you can do to improve your confidence intervals or ability to make predictions. For example, if you know that your data source has a Poisson distribution and the points are uncorrelated, then once you know the mean, there’s no other piece of information that can improve your ability to predict new values from the distribution. Broadly speaking, martingale randomness satisfies the de Finetti conditions of coherence, in that odds assignments must match up with known probabilities, and internal consistency needs to be maintained.

If your dice are loaded, then you’ve got a generator of Type 2 randomness. Over time, you can make better and better predictions about how often the different numbers will come up. But you still won’t be able to predict, with certainty, the results of any given roll. If somehow you knew the exact probabilities for each face, then you could use these dice as a generator of Type 3 randomness.

In a sense, the very first number generated by your computer’s random number algorithm is martingale random. There’s no way, unless you know how the seed is generated from the CPU’s timing and can “see” the microseconds tick by, to predict the range in which that number will fall with greater accuracy than would be expected by chance alone. On the other hand, it could be argued that the decimal part of the CPU’s clock isn’t really uniformly distributed. There will be some slight bias towards lower numbers, which is natural for any distribution of numbers that “grows” in size, even if it cycles (see Benford’s Law, and note that it applies not just to the first digit of a number, but to secondary digits as well). With enough careful investigation, you might be able to convert that first seeded random number into a case of Type 2 randomness.

Type 3 randomness is the holy grail of randomization. Casinos want dice which are perfectly symmetric in weighting, and resistant to wear and tear that might cause bias. Assignments to treatment in a clinical trial should strive for martingale randomness. Failure to achieve martingale randomness, when it is required, can have highly negative consequences.

“Beating the house” at a casino involves turning Type 3 randomness into Type 2 randomness, with enough usable signal left to overcome the casino’s inherent advantage. Strategies like analyzing roulette spins to find bias, and most famously counting cards, have been successfully used. One group of geeks was able to turn the randomness of a Vegas lottery machine which followed a Type 1 sequence into Type 0. They made the tactical mistake of hitting two huge jackpots in a row, tipping off the casino that they had successfully cracked the code. From the casino’s perspective, you have an inverse classification problem: given how well players are doing, what can you infer about the type of randomness they are detecting? Those jackpot-winning geeks could have taken a lesson from code breakers in WWII, who thought carefully about how to use the information contained in the cracked messages without showing the Germans that their code had been broken and that the Allies understood their messages as more than the white noise of Type 3 randomness.

Because what separates the Types is our knowledge, randomness can come from a generation process that is completely deterministic and known to others (Type 0). As far as I’ve been able to tell, the digits of pi (beyond the ones you have memorized) are martingale random. If I give you a sequence of digits, and tell you they come from somewhere after the trillionth digit of pi, and let you use any tools you want short of a computer, there’s nothing you could do in a single lifetime to predict additional digits with an accuracy greater than 1/10th. Note that while the tail digits of pi appear to be a source of martingale randomness, not all irrational (or even transcendental) numbers have unpredictable digits. As counterexamples, see Liouville’s or Champernowne’s numbers. Any data source of Type 2 or greater must be incompressible, in the sense that if the sequence has infinite length, no finite-length description of it can exist. If there were a finite-length algorithm that could re-create (or predict) the sequence, then it’s at most Type 1 randomness (until we figure out this algorithm, if need be by iterating over all possible algorithms, starting with the shortest, until we get there).
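Champernowne’s constant, 0.123456789101112…, is a handy illustration of a transcendental number whose digits are anything but unpredictable: you get it by writing the positive integers one after another. A few lines of code serve as the finite-length description of the whole infinite sequence (the generator name is my own):

```python
from itertools import count, islice

def champernowne_digits():
    """Yield the decimal digits of 0.123456789101112... one at a time."""
    for n in count(1):
        for ch in str(n):
            yield int(ch)

first_twenty = "".join(str(d) for d in islice(champernowne_digits(), 20))
print(first_twenty)  # 12345678910111213141
```

Since this short program predicts every digit, the sequence is at most Type 1 randomness for anyone who hasn’t yet spotted the pattern, and Type 0 for anyone who has.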

I’ve tried to make these categorizations as clear as possible, but there are still edge cases which are hard to place. As is always the case, the closer you look, the fuzzier things get. However, I think you will still find this categorization of randomness to be quite useful, especially as a tool to discuss edge cases. Consider for a moment Chaitin’s Omega, which is the probability (weighted by string length) that a given computer program, run in a fixed computing environment, halts. The first few digits of Omega, determined by very short programs which instantly halt or loop, are easy to figure out. But we know from Turing that the halting problem is, in general, undecidable. So at some point, the digits of Omega become unknown and unknowable. Nor can we know when they become unknowable! The digits of Omega make the transition from Type 0 randomness (known) to Type 1 randomness (we just need to run the programs and see if they halt or loop), to Type 2 randomness (we may be able to set upper and lower bounds for the next few digits, or make a likelihood prediction based on reasoning and past experience), to Type 3 randomness (only God could predict the 10,000th digit with better than chance accuracy) and perhaps, almost frighteningly, to Type 4 randomness (God’s in a back alley, shaking up the dice right now).

3

Dec 11

The first thing you learned about probability is wrong*

I’ve just started reading Against the Gods: The Remarkable Story of Risk, a book by Peter Bernstein that’s been high on my “To Read” list for a while. I suspect it will be quite interesting, though it’s clearly targeted at a general audience with no technical background. In Chapter 1 Bernstein makes the distinction between games which require some skill, and games of pure chance. Of the latter, Bernstein notes:

“The last sequence of throws of the dice conveys absolutely no information about what the next throw will bring. Cards, coins, dice, and roulette wheels have no memory.”

This is, often, the very first lesson that gets presented in a book or a lecture on probability theory. And, so far as theory goes it’s correct. For that celestially perfect fair coin, the odds of getting heads remain forever fixed at 1 to 1, toss after platonic toss. The coin has no memory of its past history. As a general rule, however, to say that the last sequence tells you nothing about what the next throw will bring is dangerously inaccurate.

In the real world, there’s no such thing as a perfectly fair coin, die, or computer-generated random number. Ok, I see you growling at your computer screen. Yes, that’s a very obvious point to make. Yes, yes, we all know that our models aren’t perfect, but they are very close approximations and that’s good enough, right? Perhaps, but good enough is still wrong, and assuming that your theory will always match up with reality in a “good enough” way puts you on the express train to ruin, despair and sleepless nights.

Let’s make this a little more concrete. Suppose you have just tossed a coin 10 times, and 6 out of the ten times it came up heads. What is the probability you will get heads on the very next toss? If you had to guess, using just this information, you might guess 1/2, despite the empirical evidence that heads is more likely to come up.

Now suppose you flipped that same coin 10,000 times and it came up heads exactly 6,000 times. All of a sudden you have a lot more information, and that information tells you a much different story than the one about the coin being perfectly fair. Unless you are completely certain of your prior belief that the coin is perfectly fair, this new evidence should be strong enough to convince you that the coin is biased towards heads.
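One way to put numbers on this is a Beta prior on the probability of heads, updated with the observed counts. The Beta(50, 50) prior below is purely my assumption: it stands for a fairly strong, but not absolute, belief that the coin is fair.

```python
def posterior_mean(heads, tails, prior_heads=50.0, prior_tails=50.0):
    """Posterior mean of P(heads) under a Beta(prior_heads, prior_tails) prior."""
    return (prior_heads + heads) / (prior_heads + prior_tails + heads + tails)

after_10 = posterior_mean(6, 4)
after_10000 = posterior_mean(6000, 4000)
# A prior so heavy it is effectively certain that the coin is fair.
stubborn = posterior_mean(6000, 4000, prior_heads=1e12, prior_tails=1e12)

print(round(after_10, 3))     # 0.509
print(round(after_10000, 3))  # 0.599
print(round(stubborn, 3))     # 0.5
```

Ten flips barely move the estimate, ten thousand flips drag it almost all the way to 0.6, and a prior that is effectively certain doesn’t budge no matter what the data say.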

Of course, that doesn’t mean that the coin itself has memory! It’s simply that the more often you flip it, the more information you get. Let me rephrase that: every coin toss or dice roll tells you more about what’s likely to come up on the next toss. Even if the tosses converge to one-half heads and one-half tails, you now know with a high degree of certainty what before you had only assumed: the coin is fair.

The more you flip, the more you know! Go back up and reread Bernstein’s quote. If that’s the first thing you learned about probability theory, then instead of knowledge you were given a very nasty set of blinders. Astronomers spent century after long century trying to figure out how to fit their data with the incontrovertible fact that the earth was the center of the universe and all orbits were perfectly circular. If you have a prior belief that’s one-hundred-percent certain, be it about fair coins or the orbits of the planets, then no new data will change your opinion. Theory has blinded you to information. You’ve left the edifice of science and are now floating in the ether of faith.

23

Nov 11

Monty Hall revisited

Chances are you’ve already heard about the Monty Hall problem. I wouldn’t be mentioning it at all, except that I keep reading descriptions of the problem that miss the absolutely critical point. For those who are new to the problem, here’s a summary:

Suppose you’re a contestant on a game show. The host, Monty Hall, shows you three numbered doors. Two of these doors hide goats, which you don’t want, and one of them hides a shiny new convertible, which you do. Pick the right door and you go home with the convertible, pick the wrong door and you get the goat (which I suspect they don’t even really give you). You make your best guess and choose a door. But before showing what’s behind it, Mr. Hall opens one of the other two doors to reveal a goat. “Now”, he asks, “do you want to stick with your original choice, or do you want to switch doors to the other one that hasn’t been opened yet?”

While you try desperately to remember the rules for conditional probability, the studio audience yells out suggestions and an attractive model smiles at you, making you wonder if you should ask if she comes with the car, but then you realize she probably gets that question all the time. Time is running out! Should you switch doors?

The correct decision, at least in terms of maximizing your chances of winning the car (but, alas, not the model), is to switch. IQ Test Grand Champion and writer Marilyn vos Savant famously answered the question in one of her columns. Her answer, that you should switch, was widely controversial. The math behind the solution is surprisingly simple, though it rarely seems to be presented in a simple way. Your first guess has a one-in-three chance of being right. That means your first guess has a two-in-three chance of being wrong. If your first guess was wrong, that means the car must be behind one of the other two doors. Since Monty just showed you the goat, the car must be behind the other door. Switch and you will get the car for sure. If you don’t switch, your chance of winning remains one-in-three. If you do switch, it jumps up to two-in-three. So ignore the studio audience and don’t get distracted by the model. Just call out the number of that other door!

But wait! Did you catch the missing assumptions needed to make this solution work? The big one, for me, is that Monty Hall will always follow the same procedure of opening up a door with a goat, regardless of what’s behind the door you picked. If you distrust Monty, you might suspect that he will only show you a goat when you’ve picked the car, in order to entice you to switch and lose the car. In that case you should stick with the door you have. Or perhaps Monty shows the goat more frequently when the car is picked first (but not all the time), in which case switching may or may not be the best strategy.
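A short simulation shows how much hangs on that assumption. It compares two hypothetical hosts: one who always opens a goat door (picking at random when he has two to choose from), and a devious one who only opens a door when you have already picked the car. Both behaviours, and the function name, are my own modelling assumptions.

```python
import random

random.seed(3)
DOORS = (1, 2, 3)

def switch_win_rate(host_always_reveals, trials=100_000):
    """Share of games won by switching, counted over games where Monty opens a door."""
    wins = offers = 0
    for _ in range(trials):
        car = random.choice(DOORS)
        pick = random.choice(DOORS)
        if not host_always_reveals and pick != car:
            continue  # devious Monty: no goat shown unless you already hold the car
        opened = random.choice([d for d in DOORS if d not in (pick, car)])
        switched = next(d for d in DOORS if d not in (pick, opened))
        offers += 1
        wins += switched == car
    return wins / offers

honest = switch_win_rate(host_always_reveals=True)
devious = switch_win_rate(host_always_reveals=False)

print(f"always-reveal Monty, switching wins: {honest:.3f}")
print(f"devious Monty, switching wins:       {devious:.3f}")
```

Against the first host, switching wins about two times in three. Against the second, switching never wins, because the offer itself tells you that you already have the car.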

The part where I yell

The problem here is that the Monty Hall problem MAKES NO SENSE WITHOUT AN EXPLICIT PRIOR on Monty Hall’s behavior. Sorry for the yelling, but the point is too important to miss. In this case, the prior is your belief about the procedure Monty is using, and how strongly you hold that belief to be true. The notion of a “prior” might be difficult to explain to a general audience, but assuming a particular one without stating it directly is poisonous. The Monty Hall problem, like many others, can’t be turned into math without first assuming some kind of probability distribution for the inputs.

Usually, when the distribution of an input isn’t specified, we tend to assume that every possible option has an equal chance of occurring; in other words, that we have a uniform probability distribution. This makes sense for another hidden assumption in the problem: that either the game show contestant has made his first pick randomly, or that the prizes were placed behind doors randomly. Though even here I tend to agree with mathematical historian Byron Wall, who argues that our default assumption of a set of equally likely events is problematic. But in the case of the Monty Hall problem, there’s no uniform to even assume. The set of possible ways that Mr. Hall could decide to act is infinite and unknowable.

How does Hall pick between the goats?

Another hidden assumption is that Monty randomizes which door to reveal if the unpicked doors are both hiding goats. If he didn’t, and you knew for sure that Monty would always pick the door with the lower number whenever he had a choice, then the math works out differently. Now, if you pick door number 1 and Monty shows you a goat behind door number 3, you know for sure the car must be behind door number 2. Switching guarantees you a win! If you pick door number 1 and Monty opens door number 2, that could mean either a car or a goat is behind door number 3. To calculate your odds of winning by switching, you can use Bayes’ theorem to find the probability that a car is behind door number 3, given that Monty revealed a goat behind door 2.

Work out the math, and you should get one-half. In other words, if Monty shows you door number 2, and if he’s using the rule stated above, then switching doors gives you a one-half probability of winning, as does staying with the door you have. It doesn’t matter. No matter which door Monty reveals, switching your pick is never worse than not switching, and sometimes it’s better to switch. That means it’s what game theorists call a dominant strategy, one you would always want to employ. Even so, since Monty’s door revealing rules can change your odds of winning, this is another hidden assumption that should have been made explicit.
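If you would rather let the computer apply Bayes’ theorem, the three car positions can be enumerated exactly. This sketch assumes you picked door 1, the car is equally likely to be behind any door, and Monty always opens the lowest-numbered unpicked goat door; the function name is hypothetical.

```python
from fractions import Fraction

def switch_win_given_opened(pick=1):
    """P(switching wins | door Monty opened) under the lowest-number rule."""
    joint = {}  # opened door -> (P(opened and switching wins), P(opened))
    for car in (1, 2, 3):
        prior = Fraction(1, 3)  # car equally likely behind each door
        opened = min(d for d in (1, 2, 3) if d != pick and d != car)
        win, total = joint.get(opened, (Fraction(0), Fraction(0)))
        joint[opened] = (win + prior * (car != pick), total + prior)
    return {door: win / total for door, (win, total) in joint.items()}

odds = switch_win_given_opened(pick=1)
print(odds[2])  # 1/2
print(odds[3])  # 1
```

Door 2 gets opened two times in three, and in those games switching is a coin flip; door 3 gets opened the remaining third of the time, and then switching is a sure thing. Averaged together that is still the familiar two-in-three.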

Back when goats were golden

When the Monty Hall problem was originally described to me, I assumed that Monty had chosen a door to reveal at random, and that this door just happened to contain a goat. Perhaps not the most reasonable assumption to make, but at the time I was still young enough to think that winning a goat might be cooler than winning some K-car convertible (hum… maybe I still believe that). At any rate, I didn’t have the skills to work out a solution under my assumptions back then, but doing it now takes just a little bit of work.

The probability that you will win after switching, given that Monty “accidentally” reveals a goat, is actually the sum of two other probabilities. The first probability, that you will win by switching if both of the other doors contain goats, is zero. The second is the probability that only one of the two other doors was hiding a goat, in which case you will win for sure, since we already assumed that Monty revealed a goat. Because we know that Monty picked a goat by accident, we gain no additional information about the door we picked or the alternative we might switch to. Each one is equally likely to have the car, so switch or not, our probability of winning is one-half.
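The same result falls out of a brute-force enumeration. The sketch assumes you picked door 1, the car is placed uniformly at random, and Monty opens one of the other two doors blindly; games where he exposes the car are thrown out, since they fall outside the condition. The function name is my own.

```python
from fractions import Fraction
from itertools import product

def switch_win_given_accidental_goat(pick=1):
    """P(switching wins | Monty opened a random unpicked door and it held a goat)."""
    doors = (1, 2, 3)
    goat_shown = switch_wins = Fraction(0)
    for car, opened in product(doors, doors):
        if opened == pick:
            continue  # Monty never opens the contestant's own door
        weight = Fraction(1, 3) * Fraction(1, 2)  # car placement x Monty's blind choice
        if opened == car:
            continue  # he exposed the car: not the situation we are conditioning on
        goat_shown += weight
        if car != pick:
            switch_wins += weight
    return switch_wins / goat_shown

accidental = switch_win_given_accidental_goat()
print(accidental)  # 1/2
```

Of the games where a goat happens to turn up, half started with you on the car and half didn’t, so switching and staying come out even.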

If you find this explanation confusing, you might want to try Jeffrey Rosenthal’s explanation, which shows how to re-normalize probabilities of events within your target condition.

The Man Who Loved Only Bayes

After publishing her solution, Vos Savant was flooded with letters telling her she got it wrong. I suspect that many of those readers were ignorant of her assumptions, though Vos Savant says that most people fully understood the problem, and simply didn’t accept her solution. One of the few accounts to mention the importance of Monty Hall’s procedural rules, even though that part only comes after 8 pages of discussion, is in Paul Hoffman’s “The Man Who Loved Only Numbers”. To explain why so many people, many of them with advanced degrees, got it wrong, Hoffman quotes mathematician Andrew Vázsonyi:

“Physical scientists tend to believe in the idea that probability is attached to things. Take a coin. You know the probability of a head is one-half. Physical scientists seem to have the idea that the probability of one-half is fused with the coin. It’s a property. It’s a physical thing. But say I take that coin and toss it a hundred times and each time it comes up tails. You will say something is wrong. The coin is false. But the coin hasn’t changed. It’s the same coin that it was when I started to toss it. So why did I change my mind? Because my mind has been upgraded with information. This is the Bayesian view of probability. It took me much effort to understand that probability is a state of mind.”

I might view probability more in terms of degrees of (rational) belief, but the Vázsonyi quote highlights a key component missing in much of science: the direct recognition that you have a prior, and that this prior is a form of bias, very often baked right into the model you have chosen. There is no escape from this bias! The frequentist approach to probability is really just a special case within the world of Bayesian inference, where you have picked an uninformative (or minimally informative?) prior. But even here you have to model the prior. You have to know: how are we assuming that Monty Hall makes his decision about showing the contestant a goat? Is it based on some fixed probability regardless of which door the contestant picks? Does Monty consult the entrails of a chicken? As mentioned before, the world of possibilities is infinite, and no progress can be made in terms of our understanding until we delineate a space in which our prior beliefs will live. Only once we’ve done that, implicitly or (preferably!) explicitly, can we test out our beliefs, and update them based on Monty Hall’s actions.